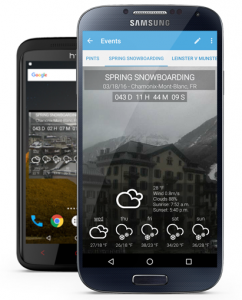

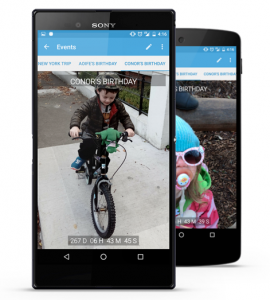

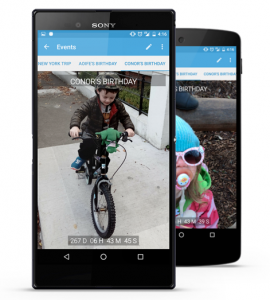

Add the Day is an Android app I designed. It counts down to any event the user chooses, and lets the user add a location for that event, the weather at that location, and background images.

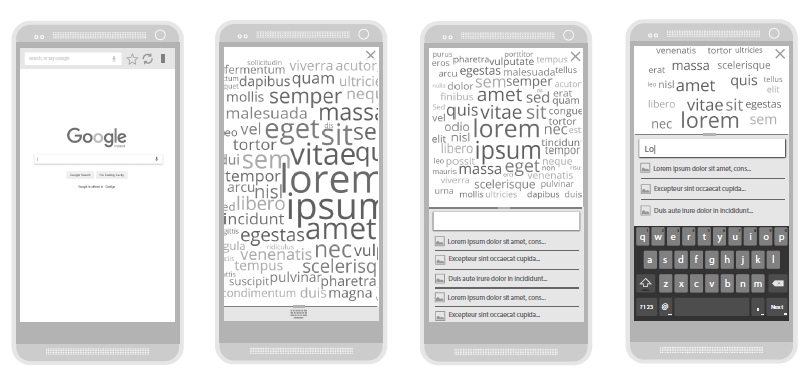

I produced all wireframes and visual assets, and the Android XML layouts.

In Add the Day, the background can be a single image from the user's gallery, a slideshow of images from their gallery, or a webcam image from the event location. The background slideshow and the webcam refresh rate are both user-configurable, and the background is animated with a Ken Burns effect (a panning and zooming effect used on still images) to give it a live feel.
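To make the Ken Burns effect concrete, here is a minimal Java sketch of the maths behind it: the zoom level and pan offset are interpolated over the length of the animation. This is an illustration only, not the app's actual code. The class `KenBurnsSketch`, its methods, and every parameter value are hypothetical names chosen for this example.

```java
/** Illustrative Ken Burns frame maths. All names here are hypothetical. */
final class KenBurnsSketch {

    /** Zoom and pan values for a single animation frame. */
    static final class Frame {
        final double scale;    // 1.0 means the image is shown at its base size
        final double offsetX;  // horizontal pan, in pixels
        final double offsetY;  // vertical pan, in pixels

        Frame(double scale, double offsetX, double offsetY) {
            this.scale = scale;
            this.offsetX = offsetX;
            this.offsetY = offsetY;
        }
    }

    private KenBurnsSketch() {}

    /** Linear interpolation from a to b, with t clamped to the range [0, 1]. */
    static double lerp(double a, double b, double t) {
        double clamped = Math.max(0.0, Math.min(1.0, t));
        return a + (b - a) * clamped;
    }

    /**
     * Returns the frame at progress t, where 0 is the start of the
     * animation and 1 is the end. The pan starts at (0, 0) and ends
     * at (panX, panY) while the zoom moves from startScale to endScale.
     */
    static Frame frameAt(double t, double startScale, double endScale,
                         double panX, double panY) {
        return new Frame(
                lerp(startScale, endScale, t),
                lerp(0.0, panX, t),
                lerp(0.0, panY, t));
    }
}
```

Calling `KenBurnsSketch.frameAt(0.5, 1.0, 1.2, -40.0, -20.0)` gives the halfway point of a gentle zoom-in that drifts up and to the left. On Android, values like these would typically be applied to the background view's scale and translation properties on each animation step.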

Events can be viewed full-screen, in an app view, or as home screen widgets. The available widgets are:

- 4×3 list view widget

- 3×3 event widget

- 3×3 stack event widget

- 2×2 stack event widget

You can customise your event view to suit the event type: show local weather for sports events and holidays, or add a local webcam as the event background for a live view.


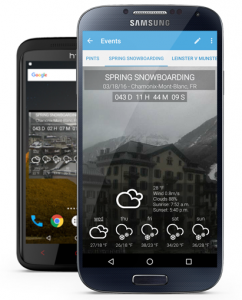

You can see the current weather conditions and a five-day forecast for the event location in the app view and on your event widgets.
